The Evolution of SEO – Where have we come from and where are we going to?

So you think you know SEO?

For the uninitiated, SEO might at first glance appear as simple as it sounds: Search Engine Optimisation – what more could there be to know about than making sure your website looks good and works well? As long as you’ve got your keywords in place, a few good links and your site is mobile optimised, you’re good, right?

Let’s delve a bit deeper – SEO is scientific: it looks at the psychology of consumers; it involves sometimes complex mathematics; it gets anthropological; we delve into sociolinguistics; it even gets a little philosophical at times. But SEO is also an art form: it takes creativity, enthusiasm, design and a dash of imagination to get the results you need.


Even those who are in the know about SEO might not realise quite how far reaching it is. Only those who have been around since the very beginning can quite appreciate the full scope of the digital world, knowing how far we’ve come and why search has developed in the way it has. Who knew that SEO was such a complex topic?

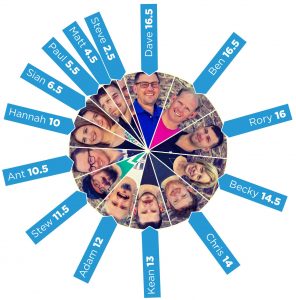

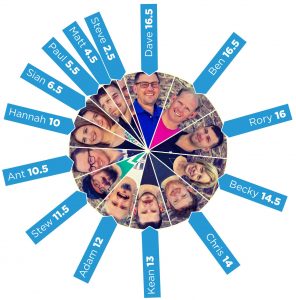

That’s why we’ve decided to go all out and share our own look back over the history and evolution of SEO and the world that surrounds it; the ups and downs the industry has been through, all the way back to the very beginning when search engines barely existed, and most certainly weren’t user-friendly, through the dark ages of search, right up to the sometimes confusing and ever fluctuating but far more sophisticated landscape we see today. And leading us on this adventure is one of the big players of the SEO world himself, Dave Naylor, Director of Digital here at Bronco, pioneer of search and revealer of new digital techniques all the way back to 1995, but back then known as DaveN…

Watch the History of SEO in under 5 minutes or scroll down to read more about it:

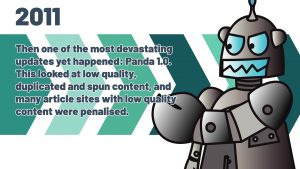

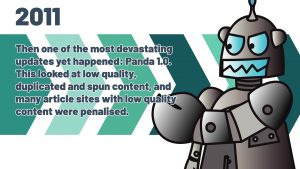

1994-5 – Search Engines – but not as we know them!

Pre-1994, virtual “libraries” such as Archie, VLib, Veronica and Jughead existed. These were indices of web servers with no or very little ability to search content. In 1994, Yahoo! launched as the first popular web directory; it was designed at this point to provide students with useful links, and webmasters had to manually submit pages for indexing. Webcrawler was the first crawler that indexed entire pages; Lycos was the first truly user friendly search engine; and Alta Vista was the biggest back then.

1995 – Enter stage right: DaveN

Google was still a far off dream in 1995, but here DaveN entered the industry. DaveN was Dave Naylor’s forum handle on WebmasterWorld where he conversed with other pioneers of the digital world such as Brett Tabke, Bruce Clay, Danny Sullivan and Googleguy – many years later, it was revealed that the latter was indeed none other than Matt Cutts himself.

The OPIC score

Up until now, search engines operated using what webmasters were then calling an OPIC score – On Page Importance Criteria. Links weren’t a “thing” at this point – it was all keywords, keywords and, you guessed it, even more keywords – the type of thing we’d today call spam.

Fun Fact!

DaveN’s very first “mild” black hat SEO discoveries happened around this time, before SEO was even a term that had been coined. He revealed to the small community the importance that Alta Vista placed on the simple Title Tag – so much so that if a secondary title tag was added with the keywords repeated, it was pretty much guaranteed the first position in their rankings. And so the era of Black Hat SEO began.

1996 – The Pre-Google Era

Backrub, the predecessor to Google, was launched by Larry Page and Sergey Brin. This relied on inbound link relevancy and popularity, counting backlinks as “votes”. At this point, in addition to keyword stuffing, webmasters were starting to go over the top with spammy backlinks. Search engines were desperately trying to create algorithms to overcome these problems, but new practices searching for loopholes such as the above were moving too quickly for them to fix.
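The idea of counting backlinks as “votes” can be illustrated with a small sketch. This is a simplified version of the PageRank calculation that Page and Brin later published, not a reconstruction of what Backrub or Google actually ran: the `pagerank` function, the `toy_web` link graph and the fixed number of iterations are all assumptions made for illustration, and the damping value of 0.85 is simply the figure commonly quoted from their paper.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by repeated vote passing (illustrative only).

    `links` maps each page to the list of pages it links out to.
    """
    # Collect every page that appears as a source or as a link target.
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    pages = sorted(pages)
    n = len(pages)

    # Start with every page holding an equal share of the total score.
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page receives a small baseline score regardless of links.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its vote equally between the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outbound links spreads its vote over all pages.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical four-page site: nothing links to "blog", most pages link to "home".
toy_web = {
    "home": ["about", "shop"],
    "about": ["home"],
    "shop": ["home"],
    "blog": ["home", "shop"],
}
scores = pagerank(toy_web)

for page, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(f"{page}: {score:.4f}")
```

In this toy graph “home” ends up with the highest score because it collects the most votes, while “blog” keeps only the baseline because nothing links to it. It also hints at why spammy backlinks worked so well: every extra inbound link, however irrelevant, added another vote.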

1997 – the term “SEO” crawled into existence

Search engines, optimisation and black hat tactics had all existed in their infancy since the early 90s, a few years before the term “SEO” was coined in 1997. Dave first heard it on the WebmasterWorld forums. Ask Jeeves also launched this year. But one big thing happened in 1997 that set the stage: Google.com was registered as a domain.

1998 – Enter stage left: Google

In 1998, Google officially launched and began the biggest revolution seen in the digital world yet. It offered better search results, and webmasters began to rely on Google above all other search engines. In a paper discussing the problems of large scale search engines, Page and Brin mentioned PageRank – the very technology Google used until September 2019 to rank results based around quality rather than OPIC factors. MSN Search, which launched just before Google in 1998, mostly still relied on on-page practices such as content, headings, tags, bold and italicised text. This was powered by Inktomi.

Dave’s launch into SEO

While DaveN had been around in the industry for a few years on WebmasterWorld, at this point he was drawn more directly into SEO through building an inkjet cartridge website for a friend. He was offered this opportunity as a commission based job which then opened the doors into the big wide world of SEO for Bronco. By the end of 1998, Dave was ranking Becky’s father’s local garage #1 for “Renault parts” and ranking another local company #1 for loft conversions.

Quick Aside: Inktomi & the Google Dance

What was Inktomi?

Inktomi was a search technology that provided services for search engines, and it was one of the biggest at the time. It had the huge advantage of having instantaneous updates when using PPC, meaning you could immediately see which tactics were working and which weren’t.

The Google Dance

Back in this era of SEO, the “Google Dance” was a big topic of conversation at least once a month. This was a period where the results in Google would suddenly go into flux for around 3 to 5 days before settling back down again as Google reorganised its rankings based on what had happened since the last Dance. If you landed in the number 1 position, you remained there until the next update around a month later.

Predicting when the dance would begin and what would be affected was a tricky skill, but we need to make a quick hat tip here to Martin Schaedel (AKA LazerZubb) – one of the early members of WebmasterWorld and a good friend of Dave’s, who always knew when the dance would begin before anyone else, thanks to keeping an eye on larger sites that would begin to move first.

It soon became apparent that there was a knack to beating the Google Dance, using Inktomi. Inktomi’s on-page algorithms almost cloned Google’s, meaning that at the end of a Google Dance period, you could easily use PPC on Inktomi to determine the actions that needed to be carried out before the next dance began.

The Wild West of SEO

During this time, SEO was a wild place to be. There were no major algorithm updates like the ones we know (and love…) today, but there were continuous Google updates and tweaks to try to manage the mad world of keyword spamming, directories, white text links, guest posts, paid exact match anchor text links, article syndication, and more. These black hat tactics were nothing new, and they actually provided good results for businesses at the time. However, content was becoming less and less user friendly and search results were being muddied by whoever had the best spamming techniques. Something had to change.

2003 – Florida: the first big update

In 2003, Florida hit. This was the first catastrophic Google update that targeted highly commercial sites. The exact algorithm for it is still unknown, but it used what experts call an SEO filter, which picked up keywords likely to be spammed and put the sites ranking for them through the filter. This resulted in major shake-ups in the search results. This was also the year that Bronco was established, but not as you know us today – we were originally a small ISP and Web Development agency, doing small scale SEO for some local clients.

2005 – Nofollow: Google attempts to fight comment spam

Comment spam was a big problem around this time, and Google introduced something new to tackle it: the rel="nofollow" attribute. This was an innovative way for Google to know which links to discount for abusing public areas online. Personalised search also launched this year. It was a big Google update that didn’t hugely affect businesses in search rankings but did change the landscape of search engine usability. A user might receive different results to the person sat next to them based on their search history.

2008 – Google Suggest

This took personalised search a step further and drew on users’ previous queries to offer popular search queries in a dropdown.

2009 – Bing

People were beginning to cry out for a new search engine, fearing that all their eggs were in one basket with Google. And so Bing entered the scene in 2009 offering an alternative, simply because it’s not Google.

2010 – Caffeine update

While Caffeine wasn’t necessarily Google’s “biggest” update, it was certainly one of the most important ones that allowed Google to offer far fresher results than ever. Caffeine was a new indexing system which first looked at the sites in the “fresh category” – if it had recently been updated or was a new page – then moved onto older pages, meaning that new content was indexed more quickly. This rewarded sites with news stories but had little direct impact on rankings. Each year from here on out has seen a new major change in Google’s algorithm; their ability to rank sites is becoming far more sophisticated, drawing a distinct line in the sand between them and search engines that came before. The landscape of SEO had moved away from multiple search engines to focus primarily on one single engine: Google.

2011 – Panda 1.0

In early 2011, a round of unnatural link warnings went out to webmasters – this was the shockwave before the Panda 1.0 (originally known as Farmer) earthquake hit in February 2011, followed by Panda 2.0 in April, and multiple Panda updates that have reverberated through the industry in the years that followed. Panda is a Google update that focuses on content, looking for duplicate or spun and low-quality content, keyword stuffing and spam. It was originally a “filter” but has, as of 2016, been incorporated into Google’s core algorithm. To highlight just how devastating Panda was to those using shady tactics in the SEO industry, you need look no further than Mahalo.com: Jason Calacanis at Mahalo had claimed that they were ranking number 1 due to their use of AdSense – a tactic he firmly believed was absolutely acceptable in Google’s eyes, until Panda hit. Their visibility initially dropped 77% according to Searchmetrics; that figure later rose to 92%.

Also in 2011, Google announced that Voice Search would begin to roll out. Around the same time, Apple’s version, Siri, launched.

2012 – Penguin

Penguin was Google’s next huge update that looked at links that they deemed to be manipulating search rankings, including obvious link building, spammy links, links in irrelevant content or over-optimised and exact match anchor text. This, of course, had a huge impact on those who had been using tactics like guest blogging, buying text links and more.

The Penguin algorithm has been iterated numerous times, becoming more effective with each release, and, like Panda, it is now part of the core algorithm.

2013 – Hummingbird & Paid Links

Hummingbird was an innovative new algorithm that gave Google the ability to parse and interpret search queries using natural language processing, including semantic phrasing and synonyms, rather than looking only at exact keywords. This was not a penalty based update, so it gave webmasters a break to recover from their Panda and Penguin related issues. Google also sent out a “reminder” in 2013 that selling follow links in order to influence PageRank violated their quality guidelines and would result in penalties. They stated here that all paid links should be marked with the nofollow attribute. Link schemes have always been against Google’s Webmaster Guidelines; however, it was around this time that they got really serious about it.

2014 – Pigeon & the Rise of Social Media

Pigeon was the big update of 2014. This looked at Google’s local search algorithm with the intent to tie it more closely to the core algorithm, focusing on users’ location. Google also stated this year that social signals such as metrics on Facebook and Twitter do not affect search rankings. Nevertheless, social media’s indirect influence on rankings was starting to become more and more important; the big social platforms – Facebook, Twitter, Instagram, YouTube, LinkedIn and Pinterest – were now well established. They had become their own search engines. Also in 2014, Amazon leapt onto the voice search bandwagon, launching their Alexa.

2015 – Mobile Friendly

As mobile searches continued to increase, it was key that Google got on top of this – delivering poor quality mobile sites to users was unhelpful – so they launched the Mobile update. Pages that had not been optimised for mobile were filtered out of SERPs.

2016 – RankBrain & Penguin 4.0

Google called RankBrain their third most important ranking factor. It was an offshoot of Hummingbird, expanding Google’s Knowledge Graph and helping Google better understand intent behind queries. While many iterations of Penguin had rolled out since 2012, this was the last one – in September 2016, Penguin became part of the core algorithm which, at that time, had more than 200 unique “signals”. It now works in real time so that changes are visible immediately.

2017 – Fred

2017 was a relatively quiet year, aside from people seeing drops in traffic in early March – up to 90% decreases almost overnight. This was later known as the “Fred” update and was not announced by Google, nor has it ever been confirmed. Most believe the motive behind Fred was to target sites with aggressive monetisation tactics as the user experience on these was very low.

2018 – Mobile First Indexing & E-A-T

In early 2018, the mobile-first strategy that Google had been working towards since 2015 was officially rolled out. Mobile traffic was now starting to surpass traffic coming from users on desktops for the first time ever. With their E-A-T broad core algorithm update, Google announced that they were rewarding sites that adhere to their Expertise, Authoritativeness and Trustworthiness guidelines. This was also known as the Medic update as it targeted sites, as stated by Google, that they believed were detrimental to “your money or your life” such as fear-mongering health and financial advice sites.

2019 – BERT & nofollow updates

Potentially the biggest leap forward in search technology in the past 5 years, BERT (Bidirectional Encoder Representations from Transformers) allowed Google to understand far more complex queries by considering how the sequence of the wording related to the intent behind it. As such, conversational questions can be better understood. Google also introduced a big change to the nofollow attribute in 2019. After 14 years, they brought in new attributes – “sponsored” and “ugc”. These can be used in combination with one another, but all paid links must have at least one of these attributes.

Today & the Future

2020 – Favicon & Ad Labelling Controversy

2020 has only just begun and we’ve already had our first big Google update – the January 2020 Core Update rolled out earlier this month and brought with it a new look for Google’s SERPs, but not one that was particularly well received. The new layout with the URL at the top of the search result alongside a favicon made it unclear which results were ads and which weren’t. As a result of feedback like ours, Google has withdrawn this and is now experimenting with new favicon placements.

The Voice Revolution

Voice search is still on the rise – one (much quoted!) stat says that by 2020, voice search may account for up to 50% of all online searches. It is important to aim for Google’s featured snippets, also known as “position zero”, and to really get into the head of your consumers – the nature of voice search means that search queries are longer and more conversational.

Nofollow Attributes

As of 1st March 2020, nofollow along with the other new attributes may be used as “hints” for ranking, crawling and indexing, whereas before, Google stated that they were completely discounted. Where the nofollow was originally introduced to tackle comment spam, this is now outdated and doesn’t cover the full scope of links. By viewing nofollow attributes as hints, Google can better understand and incorporate the signals.
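For anyone wanting to check how their own pages mark up links with nofollow, sponsored and ugc, a rough audit can be sketched with Python’s standard library alone. The `RelAuditor` class, the `audit_links` helper and the sample HTML below are hypothetical names and data made up for this example; the sketch only lists which of the three values each link carries and makes no attempt to model how Google weighs them.

```python
from html.parser import HTMLParser

# The three rel values discussed above that Google may treat as hints.
LINK_HINTS = {"nofollow", "sponsored", "ugc"}


class RelAuditor(HTMLParser):
    """Collects (href, hint values) for every <a href="..."> in a document."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href")
        if href is None:
            return
        # rel may hold several space-separated values, e.g. "sponsored nofollow".
        rel_tokens = set((attributes.get("rel") or "").lower().split())
        self.links.append((href, sorted(rel_tokens & LINK_HINTS)))


def audit_links(html):
    """Return a list of (href, hints) tuples in document order."""
    auditor = RelAuditor()
    auditor.feed(html)
    auditor.close()
    return auditor.links


# Made-up sample markup: one paid link, one user comment link, one editorial link.
sample = """
<p><a href="https://example.com/partner" rel="sponsored nofollow">Partner offer</a></p>
<p><a href="https://example.com/comment" rel="ugc">Reader link</a></p>
<p><a href="https://example.com/editorial">Editorial link</a></p>
"""
report = audit_links(sample)

for href, hints in report:
    print(href, hints or "no hint attributes")
```

Links that come back with an empty list are the ones to review if they are paid or user generated.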