The Need For Speed in the ECommerce World

In early 2017, we found that site speed had become a real issue for one of our own clients following the launch of their new website. Although more aesthetically pleasing, the new site resulted in both slower page load times and a poorer user experience, impacting both search and sales.

By mid-March, the Google Page Speed score was a lowly 41/100 on desktop, and we had started to notice the site slowing down. The back and forth between the SEO team and the developers had begun.

Questions like “How long do users stay on a web page?” and “Does site speed really affect SEO?” have plagued UX designers and SEOs for many years. So what’s the answer?

Google said in 2010:

“You may have heard that here at Google we’re obsessed with speed, in our products and on the web. As part of that effort, today we’re including a new signal in our search ranking algorithms: site speed. Site speed reflects how quickly a website responds to web requests.”

Jakob Nielsen said in 2011:

“Users often leave Web pages in 10–20 seconds, but pages with a clear value proposition can hold people’s attention for much longer. To gain several minutes of user attention, you must clearly communicate your value proposition within 10 seconds.”

Initial On-Site Changes

In June 2017, all of the image optimisation was completed on the site. Our client’s development company ran several tests – no significant improvements to page load speed were noted. This meant it was time to re-run some of the tests we had done in the past.

The development company reduced the products per page from 48 to 24, but the site still felt slow, most notably in the site search box because of how it functioned at the time. The Google Page Speed score was now sitting at 63/100 – a marginal improvement.

The Influence of AdWords

A paid search campaign was running alongside our SEO efforts, and a Google AdWords representative notified us that Google had now flagged the site as SLOW. They offered the following advice:

- Minimise HTML in CDN (also flush CDN cache). Low effort – medium reward.

- Reduce number of requests: CSS sprites (combined images). Low effort – medium reward.

- Leverage browser caching: as mainly external resources. Cron to fetch and store on server. High effort – medium reward.

- Eliminate CSS above the fold: Server Header CSS. (However, this would mean body content was initially displayed unstyled, which would give a poor UX.) High effort – medium reward.

Points 1-3 were implemented, and the Google Page Speed score advanced to 78/100 for desktop.
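As a rough illustration of the third point (fetching external resources on a schedule and storing them on your own server, so that you control their cache headers), a cron-driven script might look something like the sketch below. The function names, the destination directory and the example URL are hypothetical; this article does not describe the implementation that was actually used.

```python
import hashlib
from pathlib import Path
from urllib.parse import urlparse
from urllib.request import urlopen


def local_name(url: str) -> str:
    """Build a stable local file name: a short hash of the URL plus its base name."""
    base = Path(urlparse(url).path).name or "index"
    digest = hashlib.sha256(url.encode("utf-8")).hexdigest()[:12]
    return f"{digest}-{base}"


def fetch_bytes(url: str) -> bytes:
    """Download one resource over HTTP(S)."""
    with urlopen(url, timeout=10) as response:
        return response.read()


def mirror(urls, dest_dir, fetch=fetch_bytes):
    """Store each external resource under dest_dir and return {url: local path}."""
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    saved = {}
    for url in urls:
        target = dest / local_name(url)
        target.write_bytes(fetch(url))  # overwrite the previous copy on each run
        saved[url] = target
    return saved
```

A scheduled job could call something like `mirror(["https://example.com/widget.js"], "/var/www/static/vendor")`, after which the page templates would reference the local copies instead of the third-party URLs.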

Digging In To Server Logs

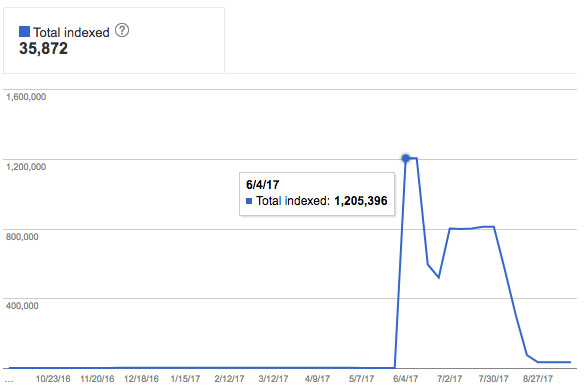

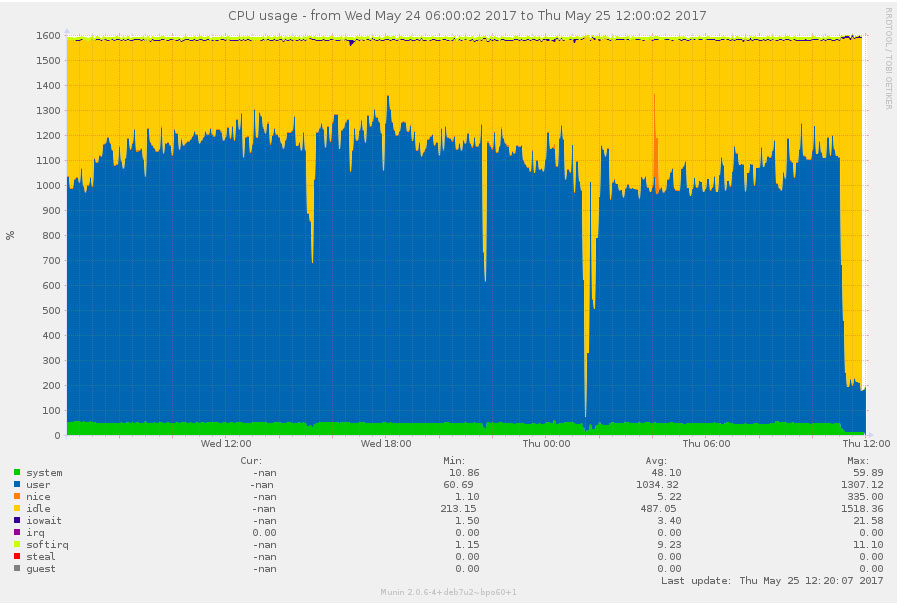

Once we requested server logs, it became very clear what the issue was. The extremity of this is shown in the image below, where, overnight, Google had navigated its way into the client’s faceted search and started to crawl all of their filter page URLs (as seen in the image above).

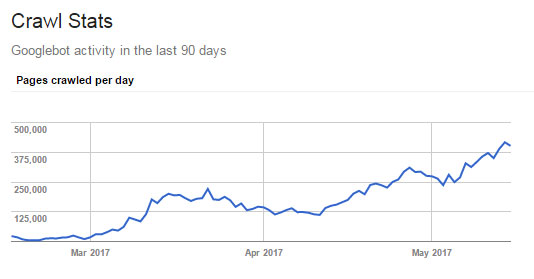

Supporting the above, the image below shows the crawl stats taken from the client’s Search Console account. We can see here that from March to May there was uneven but positive growth in the number of pages crawled by Google each day. The impact of the number of pages crawled became pretty severe, particularly in June, and required further action.

After further discussions, the server company highlighted that the server had suddenly come under a constant 70% load, which would explain why the time to first byte was over 5 seconds and why pages were taking around 20 seconds on average to load fully.
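The kind of server-log check described in this section can be sketched in a few lines. The example below assumes the common Apache/Nginx "combined" log format and a facet query parameter called `filter`; both are assumptions for illustration, as the client's actual log format and parameter name are not given here.

```python
import re
from collections import Counter
from urllib.parse import parse_qs, urlsplit

# Assumed "combined" access log format (Apache/Nginx default style).
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[(?P<day>[^:]+):[^\]]+\] '
    r'"(?:\S+) (?P<path>\S+)[^"]*" \d{3} \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

FACET_PARAM = "filter"  # hypothetical name of the faceted-search parameter


def googlebot_facet_hits(lines, param=FACET_PARAM):
    """Count, per day, requests whose user agent mentions Googlebot
    and whose URL carries the facet parameter."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that do not fit the assumed format
        if "Googlebot" not in match.group("agent"):
            continue
        query = urlsplit(match.group("path")).query
        if param in parse_qs(query, keep_blank_values=True):
            hits[match.group("day")] += 1
    return hits
```

Passing an open log file to `googlebot_facet_hits` gives a per-day count that can be compared against the Search Console crawl stats. Note that the user agent string can be spoofed, so this is only a first approximation of genuine Googlebot traffic.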

Controlling Google’s Crawl Rate

After some internal discussions, we started to whittle down the relevant actions in order to control Google’s crawl rate as effectively as possible, without the changes being detrimental to the site’s current visibility. This led us down the following routes, keeping in mind that these faceted URLs were currently set to NoIndex,Follow:

- Ensure all the canonical tags were correct and were behaving as intended.

- Nofollow all the pagination links on any category or search page.

- Implement a NoIndex,Nofollow tag on the actual facet pages (anything containing the query parameter).

- Amend the parameter handling in Google’s Search Console, including the parameters that control the ordering of product pages and whether that order is ascending or descending.

The main issue with all of the above actions was that once Google’s indexer, Caffeine (not to be confused with Googlebot), has 1.2 million URLs, there is only one way to stop it or slow it down, and it isn’t with on-page measures.
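For reference, checking the on-page signals listed above (the canonical tag and the meta robots tag) can be automated. The sketch below uses only Python's standard library; the class and function names are our own for illustration and are not taken from the client's tooling.

```python
from html.parser import HTMLParser


class RobotsAudit(HTMLParser):
    """Collect the meta robots directives and the canonical URL from a page."""

    def __init__(self):
        super().__init__()
        self.robots = []
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            # Normalise "NOINDEX, NOFOLLOW" to ["noindex", "nofollow"].
            self.robots = [v.strip().lower() for v in content.split(",") if v.strip()]
        elif tag == "link" and "canonical" in (attr.get("rel") or "").lower().split():
            self.canonical = attr.get("href")


def audit(html: str) -> dict:
    """Return the robots directives and canonical URL found in an HTML string."""
    parser = RobotsAudit()
    parser.feed(html)
    return {"robots": parser.robots, "canonical": parser.canonical}
```

Running `audit` over the HTML of a facet page and of its parent category page shows whether the facet page carries the intended directives and whether its canonical tag points where it should.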

Robots.txt vs Meta Robots

From Google: https://webmasters.googleblog.com/2007/03/using-robots-meta-tag.html

- If you block a page with robots.txt, Googlebot will never crawl the page and will never read any meta tags on the page.

- If you allow a page with robots.txt but block it from being indexed using a meta tag, Googlebot will access the page, read the meta tag, and subsequently not index it.

Valid meta robots content values

Googlebot interprets the following robots meta tag values:

- NOINDEX – prevents the page from being included in the index.

- NOFOLLOW – prevents Googlebot from following any links on the page. (Note that this is different from the link-level NOFOLLOW attribute, which prevents Googlebot from following an individual link.)

*Debatable whether this is true or not.

- NOARCHIVE – prevents a cached copy of this page from being available in the search results.

- NOSNIPPET – prevents a description from appearing below the page in the search results, as well as prevents caching of the page.

- NOODP – blocks the Open Directory Project description of the page from being used in the description that appears below the page in the search results.

- NONE – equivalent to “NOINDEX, NOFOLLOW”.

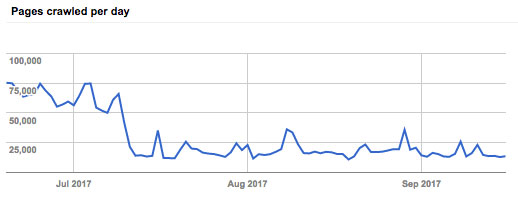

After further discussions with the client about crawl budget, we opted to handle all faceted pages through the robots.txt file, disallowing any URL containing the facet parameter and so blocking all filter pages from Google. The accompanying image shows just how effectively the crawling was reined in once the block was in place.
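Before deploying rules like these, it is worth checking which URLs they would actually block. The sketch below is a simplified matcher for Google-style robots.txt patterns, where `*` matches any sequence of characters and a trailing `$` anchors the end of the URL. The parameter name `filter` and the two rules are hypothetical, since the client's real rules are not reproduced here, and the matcher ignores `Allow` lines and rule precedence.

```python
import re

# Hypothetical rules, equivalent to these robots.txt lines:
#   Disallow: /*?filter=
#   Disallow: /*&filter=
FACET_RULES = ["/*?filter=", "/*&filter="]


def rule_to_regex(rule: str) -> re.Pattern:
    """Translate one Disallow pattern into a compiled regular expression."""
    anchored = rule.endswith("$")
    body = rule[:-1] if anchored else rule
    # Escape the literal parts and let each "*" match any run of characters.
    pattern = ".*".join(re.escape(part) for part in body.split("*"))
    return re.compile(pattern + ("$" if anchored else ""))


def is_blocked(path: str, disallow_rules=FACET_RULES) -> bool:
    """Return True if the path (including query string) matches any Disallow rule."""
    return any(rule_to_regex(rule).match(path) for rule in disallow_rules)
```

With these example rules, `is_blocked("/shoes?filter=red")` is true while `is_blocked("/shoes?page=2")` is false, so category and pagination URLs remain crawlable while facet URLs are blocked.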

Page Speed Improvements: Results

The three key positive results we noticed following these page speed improvements were:

- Considerable improvements to page load times and conversions through the website.

- An increase in website rankings across the board, which is excellent considering their competitive ecommerce search environment.

- Acquisition of Google answer boxes and imagery – albeit with a little persuasion.

We’re always looking for the next challenge! Is your website’s speed affecting your marketing performance? Get in touch with our team of experts today to find out how we can help.